The methodology described in this series already exists, in full, in practice.

Every concept — the artifact chain, truth conditions, issue taxonomy, readiness computation, stage gates — can be implemented manually. Delivery teams that have built disciplined internal processes have been following versions of this methodology for years, through checklists, review rituals, and accumulated experience.

Manual implementation works. It is also slow, inconsistent, and dependent on people who have seen enough projects to know what questions to ask.

This is where tooling changes the equation.

What Automation Actually Does

The argument for AI in software delivery is not that AI can replace delivery judgment. It is that most delivery discipline fails not because of bad judgment but because of insufficient analysis.

A PM reviewing a Statement of Work before kick-off knows the questions to ask. Given enough time, they can identify the ambiguities, name the assumptions, flag the conflicts, and produce a structured gap analysis. Most PMs do not have enough time, and most review processes do not allocate it. The review happens in thirty minutes. The issues survive.

What AI does is make comprehensive analysis tractable. Reading a fifty-page SOW against a structured set of conditions, and identifying every place where a truth condition is unsupported or the documentation contains an ambiguity or conflict, is time-consuming to do manually and well suited to a system built to do it at scale.

AI does not make the calls. It surfaces the issues so that the delivery lead can.

The Preflight Model

Preflight operationalizes the methodology in this series as a structured AI delivery system.

The intake artifact — the SOW, brief, or discovery document — is ingested into the system. AI agents analyze it against the conditions required for each delivery stage: the problem definition, the scope boundary, the decision owner, the user descriptions, the domain model, the risk inventory, the data classification.

Wherever the artifact satisfies a truth condition, the system records the supporting evidence. Wherever it contains a gap, an ambiguity, an assumption, or a conflict, the system surfaces an issue.

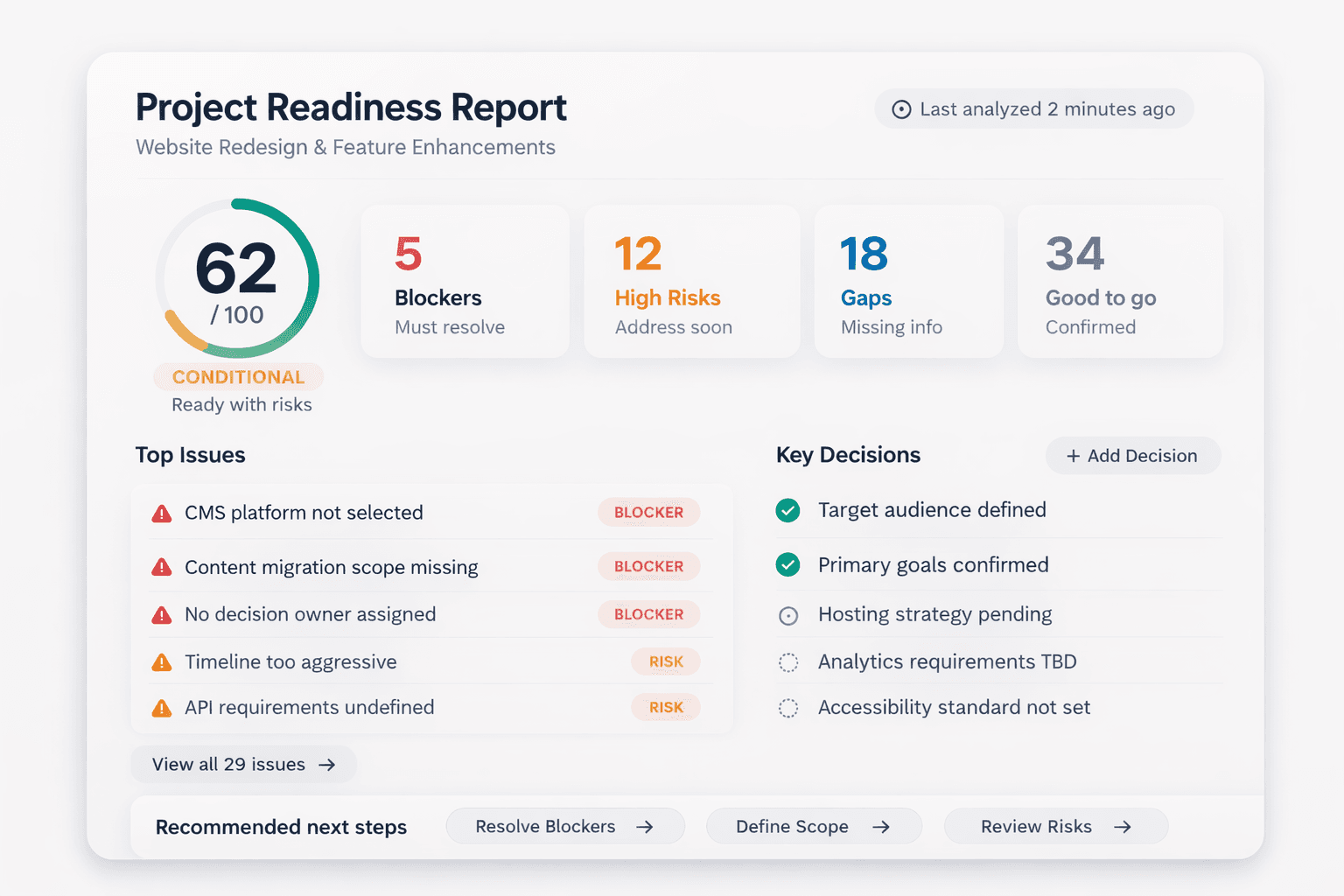

The output is not a document. It is a structured project state: which truth conditions are established, which are partial, which are missing; which issues are open; which domain readiness checks are safe, conditional, or blocked.

This is the project's actual state of knowledge — not what a checklist says was completed, but what the artifacts actually support.
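As a rough illustration of a structured project state (as opposed to a document), the sketch below models truth conditions and surfaced issues as plain Python records and rolls them up into a summary. Every name here (`ConditionStatus`, `IssueKind`, `TruthCondition`, `Issue`, `summarize`) and the three-level severity scale are hypothetical assumptions for this example; they are not Preflight's schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class ConditionStatus(Enum):
    ESTABLISHED = "established"
    PARTIAL = "partial"
    MISSING = "missing"


class IssueKind(Enum):
    GAP = "gap"
    AMBIGUITY = "ambiguity"
    ASSUMPTION = "assumption"
    CONFLICT = "conflict"


@dataclass
class TruthCondition:
    # Hypothetical record: one truth condition plus the artifact evidence supporting it.
    name: str
    status: ConditionStatus
    evidence: list[str] = field(default_factory=list)


@dataclass
class Issue:
    # Hypothetical record for an issue surfaced against one truth condition.
    condition: str
    kind: IssueKind
    severity: str  # assumed scale: "low", "medium", "high"
    is_open: bool = True


def summarize(conditions: list[TruthCondition], issues: list[Issue]) -> dict:
    """Group condition names by status and list the issues that are still open."""
    by_status = {status.value: [] for status in ConditionStatus}
    for condition in conditions:
        by_status[condition.status.value].append(condition.name)
    open_issues = [(i.condition, i.kind.value, i.severity) for i in issues if i.is_open]
    return {"conditions": by_status, "open_issues": open_issues}


# Illustrative input only; the evidence string stands in for a quoted SOW passage.
state = summarize(
    [
        TruthCondition("problem definition", ConditionStatus.ESTABLISHED, ["quoted SOW passage"]),
        TruthCondition("scope boundary", ConditionStatus.PARTIAL),
        TruthCondition("decision owner", ConditionStatus.MISSING),
    ],
    [
        Issue("scope boundary", IssueKind.CONFLICT, "high"),
        Issue("problem definition", IssueKind.AMBIGUITY, "low", is_open=False),
    ],
)
```

The point of the shape is that the summary is derived from evidence and issues, so it cannot report a condition as established unless something in the artifact supports it.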

Human Decisions on Machine Analysis

The analysis is automated. The decisions are not.

When Preflight identifies a high-severity conflict in the scope boundary, it does not resolve the conflict. It surfaces it for the delivery lead — with the relevant text, the severity, and a recommended action. The lead decides: escalate to the client, resolve it in the next working session, or accept the ambiguity with a documented rationale.

When readiness computation shows that a project's design domain is conditional — truth conditions established but with fragile stability due to open issues — Preflight does not stop the project. It gives the team the information they need to decide whether conditional readiness is acceptable given the project's current phase and risk tolerance.
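The safe, conditional, and blocked states can be sketched as a function over a domain's truth conditions and its open issues. The rule below is an assumption for illustration, not Preflight's documented computation: a missing condition blocks the domain; conditions that are established but carry open issues (or are only partially supported) make it conditional; all conditions established with no open issues is safe. The function `domain_readiness` and its parameters are hypothetical.

```python
from enum import Enum


class Readiness(Enum):
    SAFE = "safe"
    CONDITIONAL = "conditional"
    BLOCKED = "blocked"


def domain_readiness(condition_statuses: dict[str, str], open_issue_count: int) -> Readiness:
    """Hypothetical readiness rule for one delivery domain.

    condition_statuses maps each truth condition the domain requires to
    "established", "partial", or "missing".
    """
    statuses = set(condition_statuses.values())
    if not statuses or "missing" in statuses:
        # Nothing assessed yet, or a required condition has no supporting evidence.
        return Readiness.BLOCKED
    if "partial" in statuses or open_issue_count > 0:
        # Conditions are in place, but open issues or partial support make them fragile.
        return Readiness.CONDITIONAL
    return Readiness.SAFE


# Design domain: conditions established, two issues still open.
design = {"user descriptions": "established", "domain model": "established"}
print(domain_readiness(design, open_issue_count=2))  # Readiness.CONDITIONAL
```

The function only reports a state. Whether `CONDITIONAL` is acceptable at this phase remains the team's call.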

The system adds analysis capacity. The team retains control.

This distinction matters because delivery decisions are not algorithmic. Whether to accept a risk, defer an issue, or block a gate depends on context the system cannot fully evaluate: the client relationship, the commercial stakes, the team's judgment about what is recoverable. The system can ensure those decisions are made with full information, and that the decisions are recorded so they can be revisited if circumstances change.
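Recording the decision separately from the analysis is what makes it possible to revisit later. The sketch below assumes a hypothetical `DecisionRecord` type whose three options mirror the ones named earlier in this section (escalate to the client, resolve in a working session, accept with a documented rationale). The field names and the rule that an acceptance must carry a rationale are illustrative assumptions, not a Preflight API.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Decision(Enum):
    ESCALATE_TO_CLIENT = "escalate"
    RESOLVE_IN_SESSION = "resolve"
    ACCEPT_WITH_RATIONALE = "accept"


@dataclass(frozen=True)
class DecisionRecord:
    issue_id: str      # hypothetical identifier of the surfaced issue
    decided_by: str    # a person (the delivery lead), never the system
    decision: Decision
    rationale: str
    decided_on: date

    def __post_init__(self) -> None:
        # Assumed rule: accepting an ambiguity is only valid with a written rationale.
        if self.decision is Decision.ACCEPT_WITH_RATIONALE and not self.rationale.strip():
            raise ValueError("accepting an issue requires a documented rationale")


record = DecisionRecord(
    issue_id="ISSUE-7",
    decided_by="delivery lead",
    decision=Decision.ACCEPT_WITH_RATIONALE,
    rationale="Low risk in this phase; revisit before design sign-off.",
    decided_on=date(2024, 1, 15),
)

# An acceptance without a rationale is rejected rather than silently stored.
try:
    DecisionRecord("ISSUE-8", "delivery lead", Decision.ACCEPT_WITH_RATIONALE, "", date(2024, 1, 15))
    rejected = False
except ValueError:
    rejected = True
```

Making the record immutable keeps the original rationale intact; a changed decision would be a new record rather than an edit to the old one.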

The Artifact Chain in a Tool

The artifact chain described in this series — from intake artifact through capability map, user journeys, information architecture, component inventory, and definition of ready — is the organizing structure of the platform.

Each artifact type has a defined role in the chain. Each artifact can be attached to the project, analyzed by AI agents, and assessed against the truth conditions it is responsible for establishing.

When a capability in the capability map cannot be traced to a user journey because that journey has not yet been produced, the system reflects the gap in the readiness state. The design domain does not show as ready while the traceability chain is incomplete.

This is what converts the artifact chain from a theoretical model into an operational one. The chain is not a best-practice diagram to be consulted and filed. It is a live record of what the project understands and what it does not.
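The traceability rule in this section can be checked mechanically once capabilities and journeys are recorded as data. This is a minimal sketch under an assumed representation in which each user journey maps to the list of capabilities it exercises; the function names and the sample capabilities are hypothetical.

```python
def untraced_capabilities(capabilities: list[str], journeys: dict[str, list[str]]) -> list[str]:
    """Return the capabilities that no user journey traces to, in capability-map order."""
    traced = {cap for caps in journeys.values() for cap in caps}
    return [cap for cap in capabilities if cap not in traced]


def design_domain_ready(capabilities: list[str], journeys: dict[str, list[str]]) -> bool:
    # The design domain cannot show as ready while any capability is untraced.
    return not untraced_capabilities(capabilities, journeys)


# Illustrative capability map and journeys; "export report" has no journey yet.
capabilities = ["submit claim", "approve claim", "export report"]
journeys = {
    "claimant files a claim": ["submit claim"],
    "manager reviews a claim": ["approve claim"],
}

gaps = untraced_capabilities(capabilities, journeys)
```

The list of gaps matters more than the boolean: it names exactly which journey still has to be produced before the design domain can move.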

What Changes in Practice

The practical change is not that projects become easier. It is that project teams know more earlier.

Issues that would surface in the design phase as scope disputes surface in the intake phase as named gaps. Assumptions that would drive architectural decisions in development are identified before the capability map is built. Conflicts that would become client disputes in the review phase are visible before the acceptance criteria are written.

Early visibility does not prevent all problems. But it changes the cost of addressing them.

Resolving a gap in the intake artifact takes a conversation. Resolving the consequences of that same gap after six weeks of design work built on it takes a rework cycle.

The methodology reduces downstream cost by moving resolution earlier. The tooling makes that methodology tractable to apply consistently — not just on projects with experienced PMs who have seen every failure mode before, but on any project, from the start.

That is the value of operationalizing a delivery operating system.